What Is Deep Learning? | A Beginner's Guide

Deep learning is a machine learning technique, loosely inspired by how the human brain works, that lets computers learn from examples.

More specifically, it is a method that teaches computers to learn and make decisions without being explicitly programmed for each task. Instead of telling a computer exactly what to look for, we show it many examples and let it learn on its own.

By showing a computer lots of photos of German Shepherds, you can train it to analyze these images and learn to recognize German Shepherds on its own. With enough training on different breeds, when you show it a new picture, it can make an educated guess about which dog breed it is.

Deep learning is the technology behind many popular AI applications like chatbots (e.g., ChatGPT), virtual assistants, and self-driving cars.

Table of contents

- How does deep learning work?

- Deep learning vs. machine learning

- What are different types of learning?

- What is the role of AI in deep learning?

- What are some practical applications of deep learning?

- Advantages and limitations of deep learning

- Other interesting articles

- Frequently asked questions about deep learning

How does deep learning work?

Deep learning uses artificial neural networks that are loosely modeled on the structure of the human brain. Similar to the interconnected neurons in our brain, which send and receive information, neural networks form (virtual) layers that work together inside a computer.

These networks consist of multiple layers of nodes, also known as neurons. Each neuron receives input from the previous layer, processes it, and passes it on to the next layer. In this way, the model gradually learns to recognize increasingly complex patterns in the data.

The adjective “deep” in “deep learning” refers to the use of multiple layers in the network through which the data is processed.

There are different types of neural networks, but in its simplest form, a deep learning neural network contains:

- An input layer. This is where we feed in the data, such as images or text, for processing.

- Multiple hidden layers. These are where most of the learning happens: they learn to identify important patterns and features in the data. As the data flow through the network, the complexity of the patterns and features learned increases. The first hidden layer could learn to detect simple patterns, like the edges of an image, while the last one learns how to detect more complex features like texture or shape, related to the type of object we are trying to recognize. Eventually, the network can determine which features (e.g., muzzle) are most important to tell one breed apart from another.

- An output layer, where the final prediction or classification is made. For example, if the network is trained to recognize dog breeds, the output layer might give the probabilities that the input is a German Shepherd or some other breed.
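To make the three kinds of layers concrete, here is a minimal sketch in plain Python of data flowing from an input layer through two hidden layers to an output layer. The layer sizes, the weights, and the three input values are made up for illustration (a real network would learn its weights during training), and the function names are hypothetical rather than taken from any library.

```python
import math

def dense(inputs, weights, biases):
    """One layer: every neuron computes a weighted sum of its inputs plus a bias."""
    return [
        sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        for neuron_weights, bias in zip(weights, biases)
    ]

def relu(values):
    """Activation used in the hidden layers: negative values become zero."""
    return [max(0.0, v) for v in values]

def softmax(values):
    """Turn raw output scores into probabilities that sum to 1."""
    largest = max(values)
    exps = [math.exp(v - largest) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def forward(inputs, hidden_layers, output_layer):
    """Pass the data through each hidden layer, then through the output layer."""
    activation = inputs
    for weights, biases in hidden_layers:
        activation = relu(dense(activation, weights, biases))
    weights, biases = output_layer
    return softmax(dense(activation, weights, biases))

# Made-up, untrained weights: 3 inputs -> 4 neurons -> 3 neurons -> 2 outputs.
hidden_layers = [
    ([[0.2, -0.5, 0.1], [0.7, 0.3, -0.2], [-0.4, 0.6, 0.5], [0.1, 0.1, 0.1]],
     [0.0, 0.1, -0.1, 0.0]),
    ([[0.3, -0.2, 0.5, 0.1], [-0.6, 0.4, 0.2, 0.3], [0.1, 0.8, -0.3, 0.2]],
     [0.05, 0.0, -0.05]),
]
output_layer = ([[0.9, -0.4, 0.2], [-0.3, 0.5, 0.6]], [0.0, 0.0])

features = [0.8, 0.1, 0.5]  # hypothetical stand-in for the pixel values of one image
labels = ["German Shepherd", "other breed"]
probabilities = forward(features, hidden_layers, output_layer)
for label, p in zip(labels, probabilities):
    print(f"{label}: {p:.2f}")
```

Because the weights here are arbitrary, the printed probabilities are meaningless as predictions. The point is only the shape of the computation: each layer transforms the previous layer's output, and the output layer produces one probability per category.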

During training, a deep learning model also works backwards, from output to input. This process, known as backpropagation, allows the model to calculate how much each connection contributed to the error and to adjust it so that the next predictions or other outputs are more accurate.
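The backward pass described above is commonly called backpropagation. As a rough sketch, assume a deliberately tiny "network" with one hidden neuron and one output neuron, no activation functions, and a squared-error loss. The data, the starting weights, and the learning rate are all made-up values chosen for illustration.

```python
def train_step(w1, w2, x, y, lr):
    """One forward pass, one backward pass, and one weight update."""
    # Forward pass: input -> hidden neuron -> output neuron.
    h = w1 * x
    pred = w2 * h
    loss = (pred - y) ** 2

    # Backward pass: apply the chain rule from the output back to the input.
    d_pred = 2.0 * (pred - y)  # how the loss changes with the prediction
    d_w2 = d_pred * h          # ... with the output neuron's weight
    d_h = d_pred * w2          # ... with the hidden neuron's output
    d_w1 = d_h * x             # ... with the hidden neuron's weight

    # Adjust each weight a small step in the direction that reduces the error.
    return w1 - lr * d_w1, w2 - lr * d_w2, loss

# Made-up training data in which the correct output is always 3 times the input.
data = [(1.0, 3.0), (2.0, 6.0)]
w1, w2 = 1.0, 1.0  # arbitrary starting weights
losses = []
for epoch in range(200):
    epoch_loss = 0.0
    for x, y in data:
        w1, w2, loss = train_step(w1, w2, x, y, lr=0.01)
        epoch_loss += loss
    losses.append(epoch_loss)

print(f"loss in first epoch: {losses[0]:.4f}")
print(f"loss in last epoch:  {losses[-1]:.8f}")
print(f"w1 * w2 after training: {w1 * w2:.4f}")
```

The error shrinks from one pass over the data to the next, and the product of the two weights approaches 3, the relationship hidden in the data. Real deep learning frameworks compute these gradients automatically for networks with millions of weights.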

Deep learning vs. machine learning

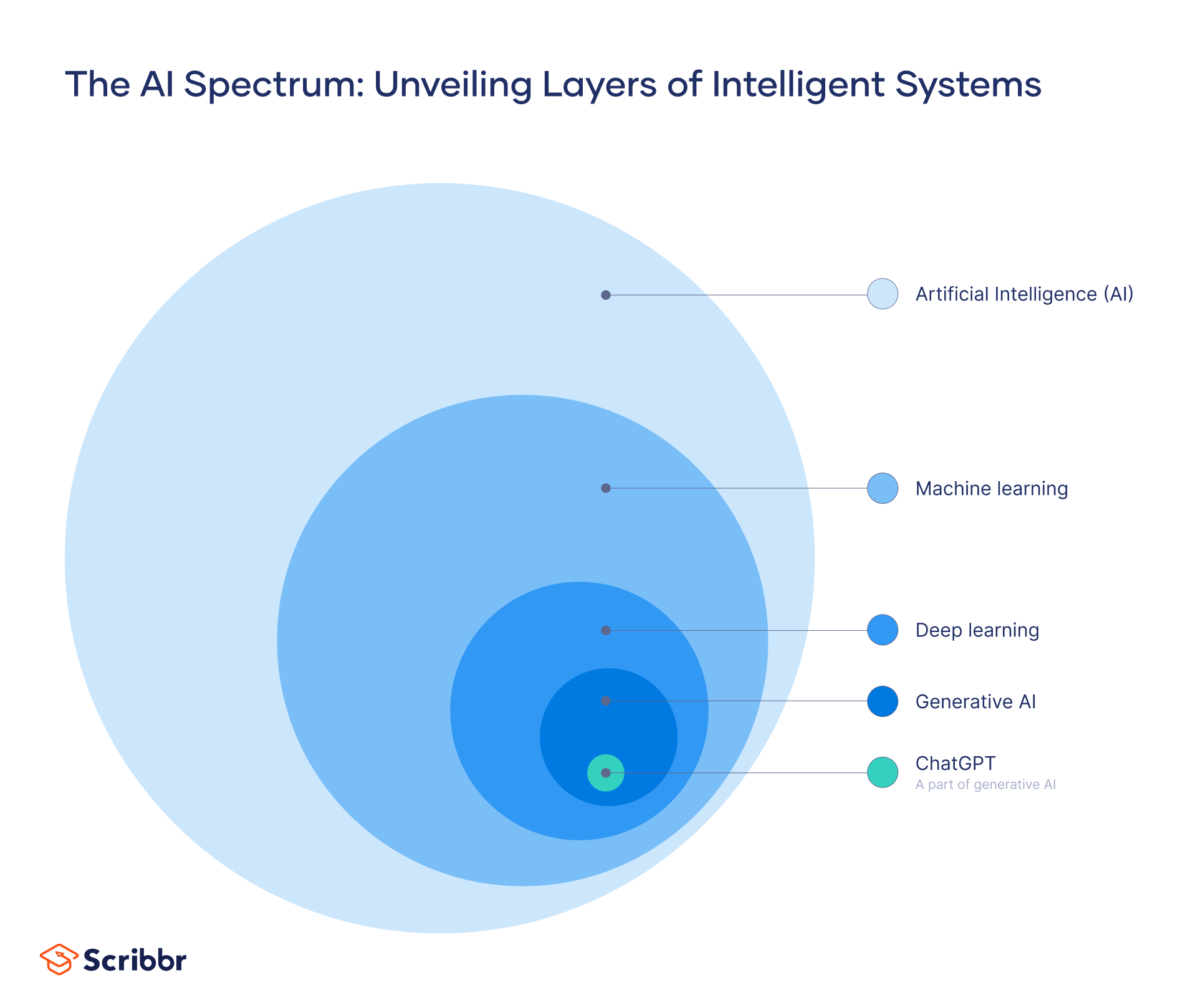

Deep learning is a specialized form of machine learning that was developed to make machine learning more efficient. Essentially, deep learning is an evolution of machine learning.

Machine learning (ML) is a subset of artificial intelligence (AI), the branch of computer science in which machines are taught to perform tasks normally associated with human intelligence, such as decision-making and language-based interaction.

ML is the development of computer programs that can access data and use it to learn for themselves. However, deep learning and ML differ in terms of:

- The type of data they work with

- The methods with which they learn

Traditional ML typically requires structured data (e.g., quantitative data in the form of numbers and values), which is often labeled. Human experts manually identify relevant features from the data and design algorithms (i.e., a set of step-by-step instructions) for the computer to process those features. As a result, traditional ML depends more on human intervention to learn.

On the other hand, deep learning models can process unstructured data such as audio files or social media posts, and determine which features distinguish different categories of data from one another, without human intervention. In other words, a deep learning network just needs data and a task description, and it learns how to perform its task automatically.

| Deep learning | Machine learning (ML) |
|---|---|
| A subset of machine learning | A subset of artificial intelligence |
| Requires large datasets for training | Can be trained on small datasets |
| Learns on its own | Human input is needed to correct errors and improve learning |
| Works with unstructured data (e.g., text, audio files, social media posts) | Can only use structured data to make predictions (e.g., dates, names, credit card numbers) |
| Continues to improve as the size of the dataset increases | Reaches a certain level of performance |
| Requires a lot of computational power | Requires less computational power |

What are different types of learning?

Deep learning, just like machine learning, uses several learning techniques:

- Supervised learning is used when the training data consist of labeled examples, i.e., examples that include the correct answer. An example is a dataset that pairs images of different dogs with the corresponding dog breed.

- Unsupervised learning is the task of learning from unlabeled data. The algorithm detects patterns in the data and groups the information by itself. For example, the dataset could consist of images of different animals with no description (label). The algorithm learns to group animals that belong to the same species on its own, by identifying similarities and differences.

- Reinforcement learning (RL) is the task of learning through trial and error. Here, the algorithm learns through rewards and punishments by interacting with its environment. For instance, by playing a video game repeatedly, an algorithm can figure out which actions maximize the rewards (i.e., lead to the highest score). Deep reinforcement learning is a specialized form of RL that uses deep neural networks to solve more complex problems.
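The difference between the first two techniques can be sketched with a toy example. To keep it short, the code below uses simple non-neural methods (a nearest-average classifier and a two-group clustering loop) instead of a deep network, and the dog weights and function names are made up for illustration. What matters is that the supervised function receives the labels, while the unsupervised one never sees them.

```python
def fit_averages(weights_kg, labels):
    """Supervised: use the given labels to compute an average weight per breed."""
    totals, counts = {}, {}
    for weight, label in zip(weights_kg, labels):
        totals[label] = totals.get(label, 0.0) + weight
        counts[label] = counts.get(label, 0) + 1
    return {label: totals[label] / counts[label] for label in totals}

def predict(averages, weight):
    """Pick the breed whose average weight is closest to the new example."""
    return min(averages, key=lambda label: abs(averages[label] - weight))

def two_groups(weights_kg, steps=10):
    """Unsupervised: split the data into two groups without using any labels."""
    low, high = min(weights_kg), max(weights_kg)  # initial group centers
    for _ in range(steps):
        group_low = [w for w in weights_kg if abs(w - low) <= abs(w - high)]
        group_high = [w for w in weights_kg if abs(w - low) > abs(w - high)]
        low = sum(group_low) / len(group_low)
        high = sum(group_high) / len(group_high)
    return group_low, group_high

# Made-up dog weights in kilograms.
weights_kg = [2.0, 30.0, 2.5, 34.0, 3.0, 38.0]
labels = ["Chihuahua", "German Shepherd", "Chihuahua",
          "German Shepherd", "Chihuahua", "German Shepherd"]

# Supervised learning: the correct answers are part of the training data.
averages = fit_averages(weights_kg, labels)
prediction = predict(averages, 28.0)
print("Supervised prediction for a 28 kg dog:", prediction)

# Unsupervised learning: only the weights are given, and groups emerge from the data.
small, large = two_groups(weights_kg)
print("Unsupervised groups:", small, large)
```

Note that the unsupervised version finds two sensible groups but cannot name them: without labels, it has no way of knowing that one group contains Chihuahuas and the other German Shepherds.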

What is the role of AI in deep learning?

While artificial intelligence (AI) is the broad science of using technology to build machines and computers that mimic human abilities (e.g., seeing, understanding, making recommendations), deep learning more specifically imitates the way humans gain certain types of knowledge.

AI provides the overarching framework and concepts that guide deep learning algorithms and models. Within the field of deep learning, AI helps with the definition of goals and objectives, as well as the methods employed to attain them.

AI facilitates the creation and development of neural networks. These neural networks can learn complicated patterns and representations from vast volumes of data. AI provides the principles and techniques necessary to successfully train these networks, allowing them to improve their performance as they learn from additional examples.

Furthermore, AI guides deep learning model evaluation and optimization. It aids in determining which model architecture, parameters, and training procedures are best suited to a particular problem or activity.

ChatGPT, for instance, relies on a type of deep neural network called a large language model (LLM). This network was trained on vast amounts of information from the internet, including websites, books, news articles, and more. ChatGPT draws from this training to generate text, answer questions, and summarize documents.

What are some practical applications of deep learning?

Deep learning has a broad range of applications across various domains, continuously pushing the boundaries of what computers can do. Here are some everyday applications of deep learning.

Personalized recommendations

Video streaming services (e.g., Amazon Prime Video, Netflix) learn your preferences to offer you suggestions. Each time you indicate that you like a movie or series by watching it to the end or adding it to your library, the service updates its algorithms to feed you more accurate recommendations.

As a student, you may encounter recommendation systems in online learning platforms that suggest relevant courses or study materials based on your interests and learning history.

Natural language processing (NLP)

Computers can understand and process human language with increasing accuracy in spoken and written forms. This ability has a wide range of applications, from chatbots and voice-enabled assistants to automatic text summarization and paraphrasing tools.

In research, NLP-powered sentiment analysis of social media posts can reveal whether people are positive, negative, or neutral towards a brand, a product, or an issue.

Computer vision

Computer vision refers to the computer’s ability to recognize objects and extract useful information from images or videos. Using deep learning, computers can understand what they are “looking at” in a way similar to humans.

This application allows autonomous vehicles to recognize traffic signs and pedestrians, but it is also used in online content moderation to automatically block unsafe or inappropriate content, as well as for virtual try-on experiences in fashion.

Advantages and limitations of deep learning

Deep learning, just like any technology, comes with a set of advantages and limitations. It is important to be aware of these so that you can better understand what deep learning can and cannot do.

Advantages

Deep learning has a number of advantages, such as the following:

- Deep learning algorithms can handle both structured and unstructured data, without relying on a human expert. Deep learning excels at pinpointing complex patterns and relationships in data, making it suitable for tasks like image recognition, natural language processing, and speech recognition.

- It allows for independence in extracting relevant features. Feature extraction is the process of finding and highlighting important patterns or characteristics in data that are relevant for solving a particular task.

- Its accuracy continues to improve over time with more training and more data.

- It can self-correct; after its training, it requires little (if any) human interference.

Limitations

However, deep learning still has some limitations:

- Deep learning insights are only as good as the data we train the model with. When trained on unrepresentative data, or on data that reflect historical inequalities, deep learning models may replicate or amplify human biases around ethnicity, gender, age, and so on. This is called algorithmic bias.

- Deep learning models require large computational and storage power to perform complex mathematical calculations. These hardware requirements can be costly. Moreover, compared to conventional machine learning, this approach requires more time to train.

- These models have a so-called “black box” problem. In deep learning models, the decision-making process is opaque and cannot be explained in a way that can be easily understood by humans. If an autonomous vehicle injures a pedestrian, for example, we can’t trace the model’s “thought process” and see exactly what factors led to this mistake.

Other interesting articles

If you want to know more about ChatGPT, AI tools, fallacies, and research bias, make sure to check out some of our other articles with explanations and examples.

Frequently asked questions about deep learning

- Can deep learning models be biased in their predictions?

Deep learning models can be biased in their predictions if the training data consist of biased information. For example, if a deep learning model used for screening job applicants has been trained with a dataset consisting primarily of white male applicants, it will consistently favor this specific population over others.

- What kind of data are needed for deep learning?

Deep learning requires a large dataset (e.g., images or text) to learn from. The more diverse and representative the data, the better the model will learn to recognize objects or make predictions. Only when the training data are sufficiently varied can the model make accurate predictions or recognize objects from new data.

- What is knowledge representation and reasoning?

Knowledge representation and reasoning (KRR) is the study of how to represent information about the world in a form that can be used by a computer system to solve and reason about complex problems. It is an important field of artificial intelligence (AI) research.

An example of a KRR application is a semantic network, a way of grouping words or concepts by how closely related they are and formally defining the relationships between them so that a machine can “understand” language in something like the way people do.

A related concept is information extraction, concerned with how to get structured information from unstructured sources.

- What is information extraction?

Information extraction refers to the process of starting from unstructured sources (e.g., text documents written in ordinary English) and automatically extracting structured information (i.e., data in a clearly defined format that’s easily understood by computers). It’s an important concept in natural language processing (NLP).

For example, you might think of using news articles full of celebrity gossip to automatically create a database of the relationships between the celebrities mentioned (e.g., married, dating, divorced, feuding). You would end up with data in a structured format, something like MarriageBetween(celebrity1,celebrity2,date).

The challenge involves developing systems that can “understand” the text well enough to extract this kind of data from it.
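As a rough illustration of the target format, the sketch below turns two invented sentences into MarriageBetween records. It relies on a hand-written pattern that only works for sentences phrased exactly like "X married Y on DATE", which is an assumption made to keep the example small. Real information extraction systems need to cope with far more varied wording, which is where the difficulty lies. All names and dates are made up.

```python
import re
from typing import NamedTuple

class MarriageBetween(NamedTuple):
    """Structured record mirroring MarriageBetween(celebrity1,celebrity2,date)."""
    celebrity1: str
    celebrity2: str
    date: str

# Simplifying assumption: a name is one or more capitalized words,
# and the date is written as YYYY-MM-DD.
NAME = r"[A-Z][a-z]+(?: [A-Z][a-z]+)*"
PATTERN = re.compile(
    rf"(?P<a>{NAME}) married (?P<b>{NAME}) on (?P<date>\d{{4}}-\d{{2}}-\d{{2}})"
)

def extract_marriages(text):
    """Return one structured record per sentence that matches the pattern."""
    return [
        MarriageBetween(m.group("a"), m.group("b"), m.group("date"))
        for m in PATTERN.finditer(text)
    ]

# Invented example text (the unstructured source).
text = (
    "Ada Example married Max Sample on 2021-06-12. "
    "Later, Kim Placeholder married Lee Demo on 2019-03-30."
)

records = extract_marriages(text)
for record in records:
    print(record)
```

A sentence such as "Ada Example and Max Sample tied the knot last June" carries the same information but would not match this pattern, which shows why hand-written rules alone do not scale.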

Cite this Scribbr article

Nikolopoulou, K. (2023, August 04). What Is Deep Learning? | A Beginner's Guide. Scribbr. Retrieved April 22, 2024, from https://www.scribbr.com/ai-tools/deep-learning/
