Is ChatGPT Safe? | Quick Guide & Tips

ChatGPT, the popular chatbot developed by OpenAI, was released in November 2022 and became one of the fastest-growing consumer apps of all time. It uses a large language model (LLM), a type of artificial intelligence (AI) trained on vast datasets, to recognize patterns in language and produce human-sounding text.

It has over 100 million users and is widely used for tasks like drafting emails, writing articles, and coding. But how safe is the tool?

This article explores OpenAI’s use of personal data, ChatGPT’s security features, and potential risks. It also explains how to use the tool safely.

What data does ChatGPT collect?

OpenAI collects and uses data in several ways, outlined below.

User data

Like most online services, OpenAI collects user data (e.g., names, email addresses, and IP addresses) to provide its services, communicate with users, and perform analytics that help improve those services. OpenAI states that it does not sell this data or use it to train their tools.

Personal data in training

ChatGPT is trained in part on publicly available data, including the personal information of some people. OpenAI claims to have taken steps to limit the processing of such information when training ChatGPT by excluding websites that contain large amounts of personal data and training the tool to deny requests for sensitive information.

They also claim that individuals have the right to “access, correct, restrict, delete, or transfer their personal information that may be included in our training information.”

However, it’s unclear exactly what information ChatGPT is trained on and whether OpenAI’s use of personal data complies with regional privacy laws. For example, in March 2023, Italy temporarily banned ChatGPT over concerns that it did not comply with the EU’s General Data Protection Regulation (GDPR).

ChatGPT conversations

OpenAI generally stores ChatGPT conversations to train future models and to resolve issues and bugs. These chats may also be reviewed by human AI trainers.

OpenAI does not sell training information to third parties.

It’s not clear how long OpenAI stores conversations. The company states: “We only keep this information for as long as we need it to serve its intended purpose.” This period may depend on legal requirements and on whether the information is still useful for updating their models.

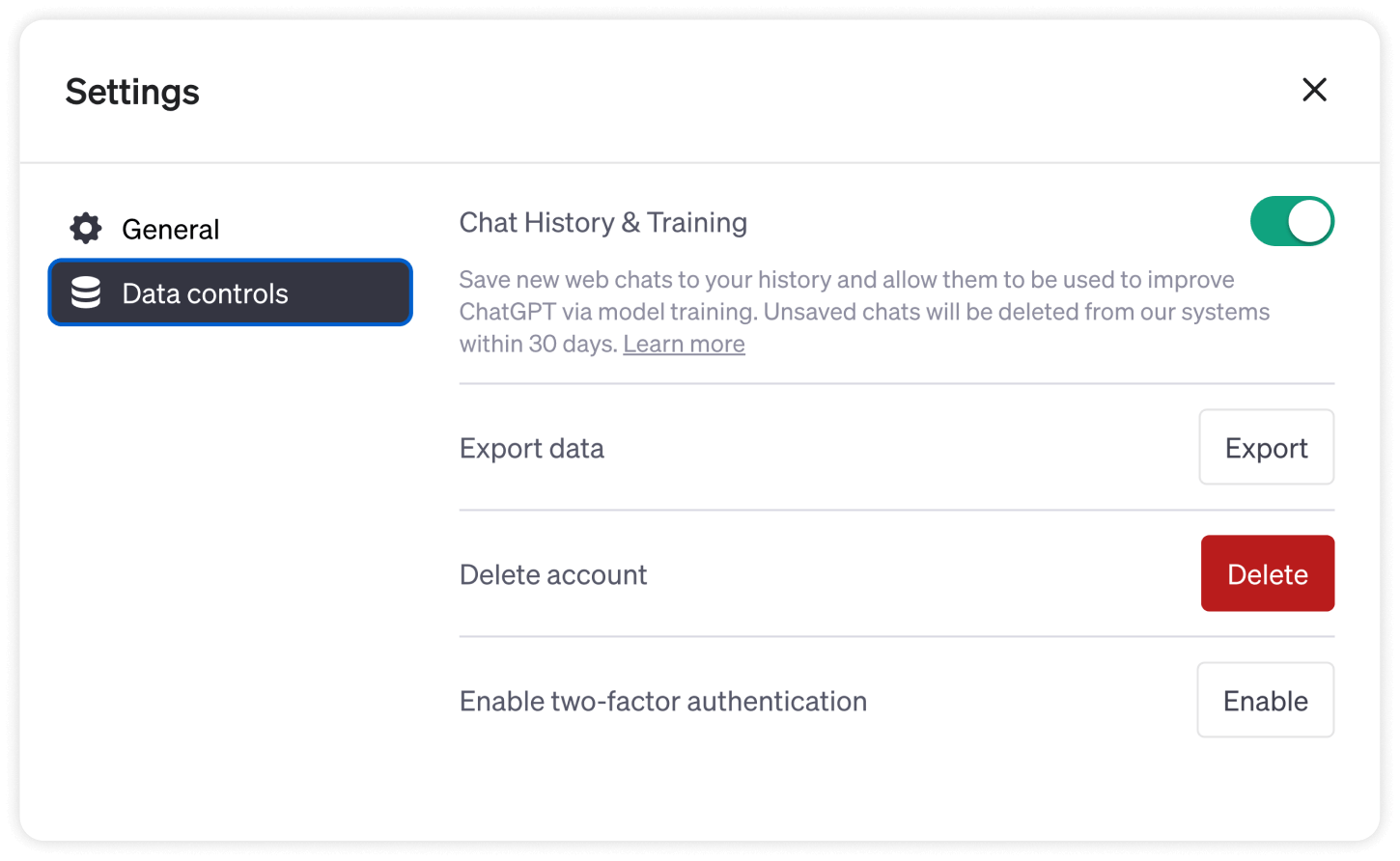

However, users can opt out of having their content used to train ChatGPT. They can also request that OpenAI delete their past ChatGPT conversations; deletion can take up to 30 days.

OpenAI security measures

OpenAI does not disclose the specific details of its security measures, but it claims to protect data in the following ways:

- Technical, physical, and administrative measures: OpenAI uses security measures like access controls, audit logs, read-only permissions, and data encryption to protect training data.

- External security audits: OpenAI is SOC 2 Type 2–compliant, meaning that an independent auditor regularly tests how effectively the company’s internal controls and security measures operate over time.

- Bug bounty program: OpenAI invites ethical hackers and security researchers to test the security of its systems and rewards them for reporting vulnerabilities.

- Regional privacy regulation: OpenAI has completed a data protection impact assessment and claims to comply with both the GDPR (which protects the personal data and privacy of people in the EU) and the CCPA (which protects the personal data and privacy of California residents).

Potential risks when using ChatGPT

Some potential risks of using ChatGPT include:

- Data breaches: Although OpenAI has numerous security measures in place, data breaches can still occur due to security vulnerabilities, software bugs, or other failures. For example, in March 2023, a bug allowed some users to see the titles of other users’ chats, as well as limited personal and billing information belonging to other users.

- Inputting sensitive information: ChatGPT generally saves user inputs, which may be used to train future models. Therefore, avoid entering sensitive information such as passwords, financial details, or confidential documents.

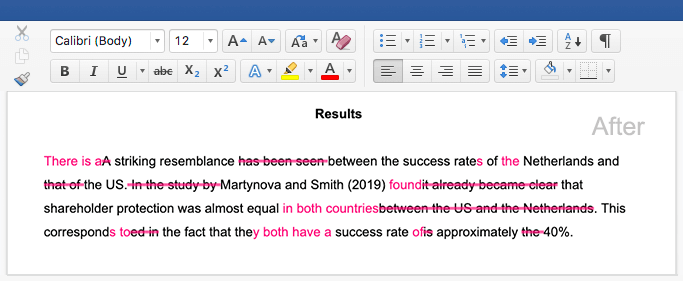

- Inaccurate information and biases: ChatGPT is not always trustworthy, and its outputs sometimes contain incorrect or biased information. Acting on or publishing such information may have negative consequences, so always verify ChatGPT’s responses against a credible source.

- Plagiarism: Passing off AI-generated text as your own work may be considered plagiarism in some contexts, such as academic assignments. Instructors and institutions may use AI detectors to identify AI-generated text.

How to use ChatGPT safely

The following steps can help ensure you use ChatGPT safely.

- Review the privacy policy and data handling practices yourself: Stay aware of any changes to OpenAI’s policies, and only use the tool if you agree with how your personal data will be used.

- Only use ChatGPT through the OpenAI website or official apps: Official ChatGPT apps are available for iOS and Android devices; otherwise, use the official OpenAI website to access the tool. Avoid unofficial apps and extensions that imitate ChatGPT, as they may be fraudulent or malicious.

- Do not input sensitive information: Because your conversations may be used to train future models, avoid entering personal or sensitive information, and consider opting out of model training.

Cite this Scribbr article

Ryan, E. (2023, November 16). Is ChatGPT Safe? | Quick Guide & Tips. Scribbr. Retrieved May 31, 2026, from https://www.scribbr.com/ai-tools/chatgpt-safety/