Comparing Plagiarism Checker Tools | Methods & Results

To find the best plagiarism checker on the market in 2024, we ran an experiment comparing the performance of 12 checkers, both free and paid.

We focused on a series of factors in our analysis. For each tool, we analyzed the amount of plagiarism it was able to detect, the quality of matches, and its usability and trustworthiness.

This article describes our research process, explaining how we arrived at our findings.

We discuss:

- Which plagiarism checkers we selected

- How we prepared test documents

- How we analyzed quantitative results

- How we selected criteria for qualitative analysis

Plagiarism checkers analyzed

We kicked off our analysis by searching for the main plagiarism checkers on the market that can be purchased by individual users, excluding enterprise software.

We tested the following 12 checkers in May and June 2024:

- Scribbr

- Grammarly

- Plagiarism Detector

- PlagAware

- Prepostseo

- Quetext

- Smodin

- DupliChecker

- Compilatio

- Check-Plagiarism

- Small SEO Tools

- Copyleaks

Test documents

The initial unedited document consisted of 140 plagiarized sections from 140 different sources. These were equally distributed across 7 different source types, in order to assess the performance for each source type individually.

The first document was compiled from:

- 20 Wikipedia articles

- 20 big website articles

- 20 news articles

- 20 open-access journal articles

- 20 restricted-access journal articles

- 20 theses and dissertations

- 20 other PDFs

Generally speaking, open- and restricted-access journal articles, theses and dissertations, large websites, and PDFs are more relevant to students and academics. Writers and marketers might be more interested in news articles and websites. Wikipedia articles can be useful for both.

Taking this into account, it is important to distinguish between the various source types. Different users (e.g., students, academics, marketers, writers) use different sources and have different needs with respect to plagiarism checkers.

You can request the test documents we used for our analysis by contacting us through citing@scribbr.com.

Edited test documents

For the next step in our analysis, the unedited test document underwent three levels of editing: light, moderate, and heavy. We wanted to investigate whether each tool was able to find a source text when it had been edited to varying degrees.

- Light: The original copy-pasted source text was edited by replacing 1 word in every sentence.

- Moderate: The original copy-pasted source text was edited by replacing 2–3 words in every sentence, provided the sentence was long enough.

- Heavy: The original copy-pasted source text was edited by replacing 4–5 words in every sentence, provided the sentence was long enough.
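The three levels differ only in how many words per sentence are swapped. The following is a minimal sketch of that idea, assuming a simple synonym lookup. The `SYNONYMS` map, the `MAX_REPLACEMENTS` table, and the `edit_sentence` helper are hypothetical illustrations, not the procedure used to prepare the test documents.

```python
import re

# Hypothetical synonym map, for illustration only.
SYNONYMS = {
    "family": "relatives",
    "medications": "drugs",
    "develop": "create",
    "illness": "sickness",
    "problems": "issues",
}

# Upper bound of replaced words per sentence for each editing level.
MAX_REPLACEMENTS = {"light": 1, "moderate": 3, "heavy": 5}


def edit_sentence(sentence: str, max_replacements: int) -> str:
    """Replace up to `max_replacements` words that have a known synonym.

    Words are considered left to right. Short sentences with fewer
    replaceable words simply receive fewer replacements.
    """
    replaced = 0

    def swap(match: re.Match) -> str:
        nonlocal replaced
        word = match.group(0)
        if replaced < max_replacements and word in SYNONYMS:
            replaced += 1
            return SYNONYMS[word]
        return word

    return re.sub(r"[A-Za-z]+", swap, sentence)


sentence = "diagnose health problems and prescribe the correct medications"
light = edit_sentence(sentence, MAX_REPLACEMENTS["light"])
moderate = edit_sentence(sentence, MAX_REPLACEMENTS["moderate"])
```

In this sketch the lookup is case-sensitive and always picks the first replaceable words in a sentence, so it is only a rough stand-in for editing by hand.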

We limited our analysis to the source texts that had been detected by all the tools during the first round. A checker that misses a source in the unedited text is unlikely to detect it once the text has been altered, so we excluded every source that at least one checker had missed.

The edited test documents consisted of 20 source texts from Wikipedia, 20 from big websites, 20 from news articles, 19 from open-access journals, 10 from restricted-access journals, 3 from theses and dissertations, and 12 from PDFs.
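The selection step amounts to a set intersection: a source text is kept only if it appears in every checker's list of detected sources. The sketch below assumes each checker's detections are available as a set of source IDs. The checker names and source IDs are made-up toy data, and `sources_found_by_all` is a hypothetical helper. The per-type counts are the ones reported above.

```python
def sources_found_by_all(detected: dict[str, set[str]]) -> set[str]:
    """Return the source IDs that appear in every checker's detected set."""
    if not detected:
        return set()
    return set.intersection(*detected.values())


# Hypothetical toy data: three checkers, four source texts.
detected = {
    "checker_a": {"wiki_01", "news_01", "thesis_01", "pdf_01"},
    "checker_b": {"wiki_01", "news_01", "pdf_01"},
    "checker_c": {"wiki_01", "news_01", "thesis_01"},
}
kept = sources_found_by_all(detected)  # only wiki_01 and news_01 survive

# Number of source texts per source type in the edited test documents.
EDITED_COUNTS = {
    "Wikipedia": 20,
    "big websites": 20,
    "news articles": 20,
    "open-access journals": 19,
    "restricted-access journals": 10,
    "theses and dissertations": 3,
    "PDFs": 12,
}
total_edited_sources = sum(EDITED_COUNTS.values())  # 104
```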

Unedited

Nurses develop a plan of care, working collaboratively with physicians, therapists, the patient, the patient’s family, and other team members that focuses on treating illness to improve quality of life. In the United Kingdom and the United States, clinical nurse specialists and nurse practitioners, diagnose health problems and prescribe the correct medications and other therapies, depending on particular state regulations.

Lightly edited

Nurses develop a plan of care, working collaboratively with physicians, therapists, the patient, the patient’s relatives, and other team members that focuses on treating illness to improve quality of life. In the United Kingdom and the United States, clinical nurse specialists and nurse practitioners, diagnose health problems and prescribe the correct drugs and other therapies, depending on particular state regulations.

Moderately edited

Nurses create a plan of care, working collaboratively with physicians, therapists, the patient, the patient’s relatives, and other team members that focuses on treating sickness to improve quality of life. In the United Kingdom and the United States, clinical nurse specialists and nurse practitioners, diagnose health issues and prescribe the correct drugs and other therapies, depending on particular state regulations.

Heavily edited

Nurses create a plan of care, working together with physicians, therapists, the patient, the patient’s relatives, and other team members that focuses on treating sickness to improve quality of life. In the United Kingdom and the United States, clinical nurse specialists and nurse practitioners, diagnose health issues and prescribe the correct drugs and other treatments, depending on particular state rules.

Procedure

We uploaded each version of the document to each plagiarism checker tool for testing. In some instances, we had to split the documents into smaller ones, since some checkers have lower word limits per upload than others. We never split a single source text across two documents.

We then manually evaluated the results, looking at each plagiarized paragraph to determine whether the checker:

(0) had not been able to attribute any of the sentences to one or multiple sources

(1) had been able to attribute one or multiple sentences to one or multiple sources

(2) had been able to attribute all sentences to one source

This way, we were able to test whether checkers with a high plagiarism percentage actually had been able to find and fully match the source, or if they simply attributed a few sentences of the source text to multiple sources. This method also helped screen for false positives (e.g., highlighting non-plagiarized parts or common phrases as plagiarism).
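The 0/1/2 rubric can be expressed as a small function. This is a minimal sketch under two simplifying assumptions: each sentence is attributed to at most one source, and the function does not verify that the matched source is the correct one. `score_paragraph` and its input format are hypothetical. The evaluation itself was done manually.

```python
from typing import Optional, Sequence


def score_paragraph(sentence_sources: Sequence[Optional[str]]) -> int:
    """Score one plagiarized paragraph on the 0-2 scale.

    `sentence_sources` holds, for each sentence, the ID of the source the
    checker attributed it to, or None if the sentence was not matched.
    """
    matched = [source for source in sentence_sources if source is not None]
    if not matched:
        return 0  # no sentence attributed to any source
    if len(matched) == len(sentence_sources) and len(set(matched)) == 1:
        return 2  # every sentence attributed to the same single source
    return 1  # partial match, or sentences spread over several sources
```

For example, `score_paragraph(["a", "a", "a"])` gives 2, while `score_paragraph(["a", None, "b"])` gives 1.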

Data analysis

For the test document containing unedited text, we calculated the total score for each tool and divided it by the total possible score of 280 (140 source texts, each worth a maximum of 2 points). We then converted this number to a percentage.

We repeated this procedure for the edited text, but this time the total possible score was 208 since we only included the 104 source texts that had been found by all plagiarism checkers.
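The calculation is the same in both rounds, and only the number of source texts changes. The sketch below assumes the per-paragraph scores are available as a list of integers from 0 to 2. `percentage_score` is a hypothetical helper and the example scores are made up.

```python
def percentage_score(paragraph_scores: list[int], max_per_source: int = 2) -> float:
    """Convert per-paragraph scores into a percentage of the maximum score."""
    if not paragraph_scores:
        raise ValueError("at least one score is required")
    total_possible = len(paragraph_scores) * max_per_source
    return 100 * sum(paragraph_scores) / total_possible


# Maximum scores for the two rounds described above.
max_unedited = 140 * 2  # 280
max_edited = 104 * 2    # 208

# Made-up example: four paragraphs scored 2, 1, 0, and 2.
example = percentage_score([2, 1, 0, 2])  # 5 out of 8 points
```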

Results

The following results indicate how much plagiarism the tools were able to detect in the unedited, lightly edited, moderately edited, and heavily edited documents.

For the edited categories, we only used sources from the unedited document that were found by all plagiarism checkers during the first round. Because the sources that some checkers missed were excluded, a checker can score higher on the edited documents than on the unedited one.

This table is ranked based on the unedited column, but all the results were taken into account in our analysis.

| Plagiarism checker | Paid or free? | Unedited | Lightly edited | Moderately edited | Heavily edited |
|---|---|---|---|---|---|
| Scribbr | Paid (free version doesn’t have full report) | 86% | 96% | 94% | 74% |
| PlagAware | Paid (free trial) | 74% | 80% | 52% | 20% |
| Check-Plagiarism | Free (premium version available) | 55% | 38% | 30% | 23% |
| Small SEO Tools | Free | 49% | 50% | 50% | 39% |
| Compilatio | Paid | 49% | 50% | 49% | 31% |
| DupliChecker | Free (premium version available) | 48% | 50% | 49% | 40% |
| Quetext | Paid (free trial) | 46% | 49% | 53% | 42% |
| Prepostseo | Free (premium version available) | 44% | 45% | 40% | 36% |
| Copyleaks | Paid (free trial) | 38% | 46% | 39% | 30% |
| Grammarly | Paid (free version doesn’t have full report) | 36% | 34% | 22% | 20% |
| Plagiarism Detector | Free (paid version available) | 31% | 39% | 38% | 31% |
| Smodin | Free (paid version available) | 23% | 23% | 10% | 7% |

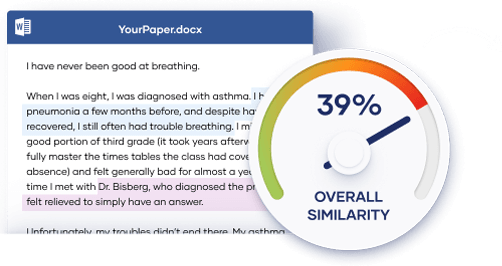

Evaluating the plagiarism checker tools

Our next step was a qualitative analysis, in which we assessed quality of matches, usability, and trustworthiness against preset criteria. Using preset criteria made the evaluation more standardized and objective. All plagiarism checkers were evaluated the same way.

The selected criteria cover many of users’ needs:

- Quality of matches: It’s crucial for plagiarism checkers to be able to match the entire plagiarized section to the right source. Partial matches, where the checker matches individual sentences to multiple sources, result in a messy, hard-to-interpret report. False positives, where common phrases are incorrectly marked as plagiarism, are also important to consider, because these skew the plagiarism percentages.

- Usability: It’s essential that plagiarism checkers show a clear overview of potential plagiarism issues. The report should be clear and cohesive, with a clean design. It is also important that users can instantly resolve the issues, for example, by adding automatically generated citations.

- Trustworthiness: It’s important for students and academics that their documents are not stored or sold to third parties. This way, they know for sure that the plagiarism check will not result in plagiarism issues when they submit their text to their educational institution or for publication. It is also important that the tool offers customer support if problems occur.

Cite this Scribbr article

If you want to cite this source, you can copy and paste the citation below.

Driessen, K. (2024, October 18). Comparing Plagiarism Checker Tools | Methods & Results. Scribbr. Retrieved June 7, 2026, from https://www.scribbr.com/plagiarism/plagiarism-checker-comparison/